Oracle Smart Flash Cache | Managed IT Services & Support

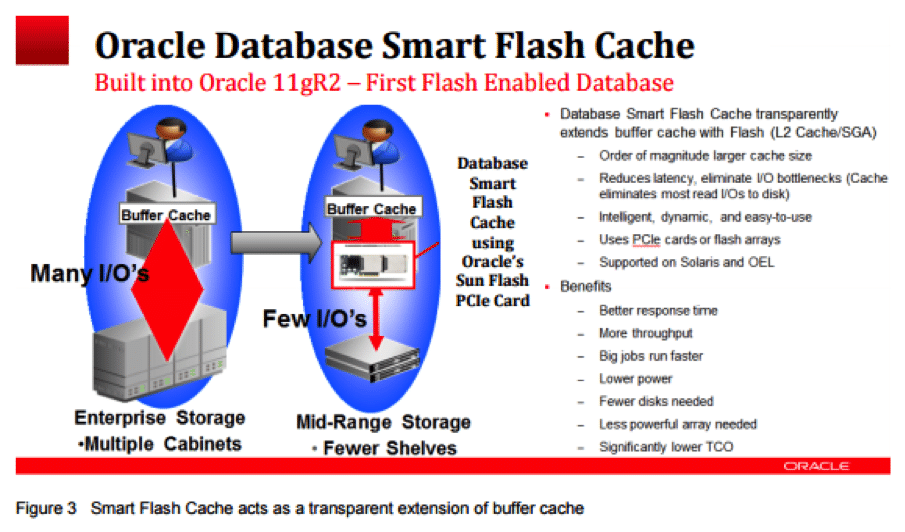

Though the cost of ownership for flash array is on the decline, it is still not at a level to replace all spinning disk arrays yet. Flash disks provide performance improvement magnitudes better than traditional hard disk drives (HDD). Flash eliminates rotational delay and seek time, functions that add latency to traditional storage media. For customers who are not ready to spend on adding too much memory and flash disks to their database servers may utilize the Smart Flash Cache, a feature introduced in Oracle Database 11gR2.

In the Oracle database instance configuration, the data buffer cache is part of the SGA and is kept in the RAM of the server. Though Flash storage is non-volatile, the database smart flash cache is a volatile memory structure of the database used to cache data blocks. In simple terms, smart flash cache is an extension of buffer cache using flash storage.

It is important not to confuse the Database Smart Flash Cache feature of the database (supported on Oracle Enterprise Linux and Solaris platforms) with Exadata Smart Flash Cache. The database SFC is an extension of SGA, whereas the Exadata SFC holds frequently accessed data in very fast flash storage. The Exadata SFC is smart because it knows when to avoid trying to cache data that will never be reused or will not fit in the cache. The Exadata SFC is read/write, but Database SFC is used only for read operations of the database.

When the very first read operation happens, the database block is read from disk to SGA (buffer cache). After sometime, the block is aged out to the smart flash cache. When the block is required again, it is read from the smart flash cache instead of disk into the SGA, thus reducing the I/O operation on the disk.

Database smart flash cache improves read operations, and hence reduces the I/O operations that go on the storage array. Reduced I/O improves the performance of the array as well.

Though database flash cache can be any flash device or LUN from a flash array, better performance is achieved by having PCIe Flash card attached to the server (no need for any interaction with flash array disk controller).

Configuring Database for Smart Flash Cache

Once the PCIe flash card is attached to the server and mounted, you can specify the device location in the database parameter db_flash_cache_file and specify the size of the flash cache using parameter db_flash_cache_size.

- SQL> alter system set db_flash_cache_file=’/dev/raw/sda’ scope=spfile;

- SQL> alter system set db_flash_cache_size=1000g scope=spfile;

- SQL> show parameter flash_cache

NAME TYPE VALUE

————————————————————————————-

db_flash_cache_file string /dev/raw/sda

db_flash_cache_size big integer 1000G

The database parameter filesystemio_options should be SETALL. Oracle also supports ASM diskgroup name as db_flash_cache_file value. If you have mulitiple PCIe flash cards attached to the server, create an external redundancy diskgroup in ASM using the flash devices and use the diskgroup for db_flash_cache_file.

If you are running a RAC database, the device file name or diskgroup must be different for each instance of the RAC database.

Below is an image from an Oracle WHitepaper summarizing the smart flash cache operation:

It is also possible to pin database objects into the flash cache area.

- alter table applsys.fnd_concurrent_requests storage (flash_cache keep);

- alter index applsys.fnd_concurrent_requests_n1 storage (flash_cache keep);

The data dictionary view V$FLASHFILESTAT displays statistics about Database Smart Flash Cache.

Database Smart Flash Cache is an efficient and low cost solution to improve the performance of database by caching more and reducing I/O operations. The smart flash cache technology can make a big difference to application throughput for workloads that are disk bound. The transaction response times are reduced from 5ms range to 0.05ms range.